The adoption of AI has more than doubled since 2017, according to a report by McKinsey. This statistic highlights how businesses continue to invest in AI and integrate it into their operations. However, a study conducted by the Boston Consulting Group reveals a sobering reality: it seems only 10% of organizations achieve significant financial returns from their AI initiatives.

To fuel AI models capable of generating new business insights and operational efficiencies, enterprises must amass vast volumes of data stored in cloud object stores. However, for most companies, the cost associated with storing such massive datasets at the desired scale becomes prohibitive, leading to a dilemma that hampers the potential of AI-driven outcomes.

The limitations imposed by the high costs of data storage force many organizations to archive or even delete significant volumes of valuable data. This constraint not only undermines the potential performance of AI models, but also diminishes the overall effectiveness and accuracy of AI outcomes.

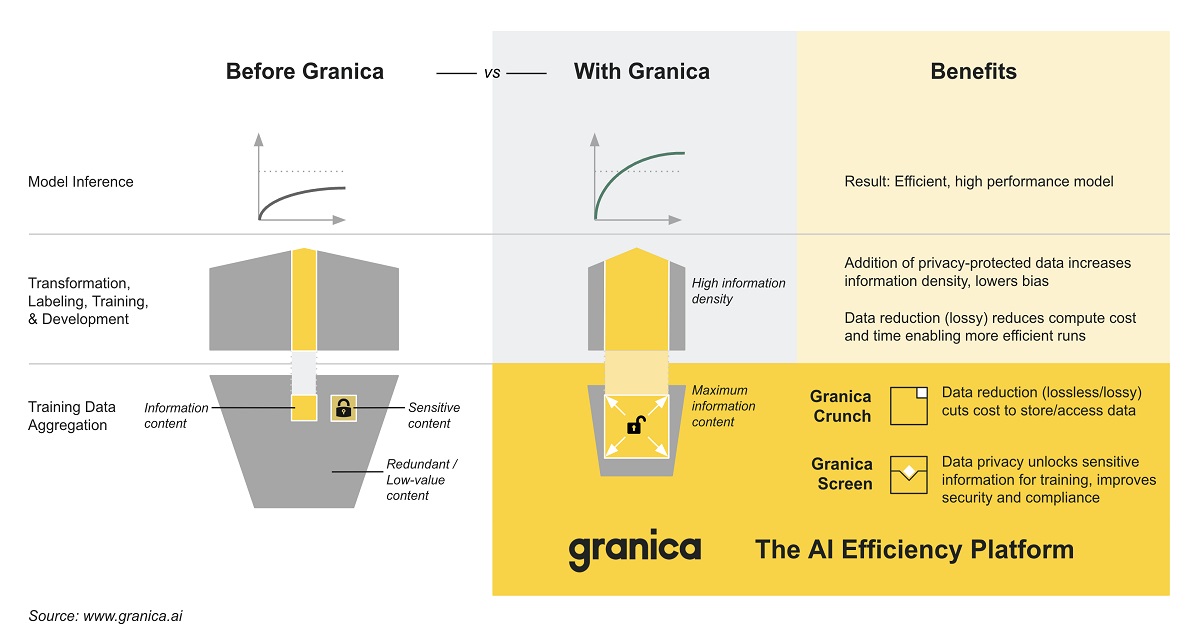

The inability to store and access critical data at scale is a pervasive challenge for enterprises, hindering their ability to fully leverage the benefits of AI technology. Granica aims to change that with its AI efficiency platform, which brings novel, fundamental research in data-centric AI to the commercial market as an enterprise solution.

By leveraging Amazon S3 and Google Cloud Storage, AI teams can now extract the utmost value from their expanding reservoirs of training data. Granica facilitates the economical capture, storage and utilization of AI data, empowering enterprises to enhance model performance and achieve superior business outcomes.

Additionally, Granica prioritizes the protection of sensitive information within object data, ensuring its secure utilization in AI and analytics, and bolstering overall data security. While initially focusing on data efficiency, Granica's future roadmap encompasses driving efficiency across the entire AI pipeline.

Granica’s AI efficiency platform comprises two main services:

- Granica Crunch, the data reduction service for enterprise AI. It provides byte-granular data reduction using novel compression and deduplication algorithms that losslessly reduce the physical size of enterprise AI training data such as sensor, image and text files, reducing the cost of storing and transferring objects in Amazon S3 and GCS by up to 80%. It also reduces write costs by up to 90% by intelligently batching write requests and optimizing other storage operations.

- Granica Screen, the data privacy service for enterprise AI. It provides byte-precise detection for high-recall, high-precision identification and protection of sensitive data, including PII contained in structured, semistructured and unstructured text data. Granica Screen enables enterprises to improve their data security posture and prevent breaches, safely use their data for AI and analytics use cases, and simplify regulatory compliance. It is built to enable privacy-enhanced computing. Granica Screen is available via an Early Access Program.
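
For readers unfamiliar with lossless data reduction, the general idea behind combining deduplication with compression can be illustrated with a minimal Python sketch. This is not Granica's algorithm, which is proprietary and described as byte-granular; the sketch assumes simple whole-object deduplication by content hash plus standard zlib compression, and the function names (`reduce_objects`, `restore`, `reduction_ratio`) are hypothetical.

```python
import hashlib
import zlib


def reduce_objects(objects: dict[str, bytes]) -> tuple[dict[str, bytes], dict[str, str]]:
    """Store each distinct payload once (deduplication), compressed losslessly."""
    blobs: dict[str, bytes] = {}  # content hash -> compressed payload
    index: dict[str, str] = {}    # object key -> content hash
    for key, payload in objects.items():
        digest = hashlib.sha256(payload).hexdigest()
        if digest not in blobs:
            # First time this content is seen: compress and keep one copy.
            blobs[digest] = zlib.compress(payload, level=9)
        index[key] = digest
    return blobs, index


def restore(key: str, blobs: dict[str, bytes], index: dict[str, str]) -> bytes:
    """Recover the exact original bytes for an object key."""
    return zlib.decompress(blobs[index[key]])


def reduction_ratio(objects: dict[str, bytes], blobs: dict[str, bytes]) -> float:
    """Fraction of physical bytes saved relative to the original payloads."""
    original = sum(len(p) for p in objects.values())
    stored = sum(len(b) for b in blobs.values())
    return 1 - stored / original
```

Because every object can be restored byte for byte, the reduction is lossless; how much is saved depends entirely on how repetitive the data is, so the figures quoted above should not be expected from this toy version.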

“Our mission is to enable enterprise AI teams to maximize the value of their data and keep much more, if not all, of their AI data ‘hot.’ This is the key to unlocking the transformative potential of artificial intelligence and machine learning,” said Rahul Ponnala, co-founder and CEO of Granica. “Data fuels the AI engines that are quickly becoming essential to modern commerce, science and everyday life. Just look at the sudden explosion in generative AI tools to get a sense of the future reach of this technology.”

Granica gives C-level executives in data-driven industries the means to boost profitability and foster innovation by pursuing AI efficiency, thereby complementing their existing optimization efforts.

Edited by Alex Passett