With seemingly “E”verything moving to the cloud, a significant amount of attention has been paid to what can and should be moved, when, where, why, how and by whom. However, not much has been written about the challenges associated with the actual user experience posed by moving information and apps from a dedicated resource to a shared one. After all, many of the benefits of cloud from scalability to availability to cost savings are rooted in increased sharing. However, with sharing comes the potential for service degradation caused by latency, packet loss, jitter and a host of other issues.

This degradation is a significant issue for service providers as they look to meet their SLAs and use performance as a means of competitive differentiation in hotly contested markets. It could also slow cloud adoption rates down the road, a risk that can be managed by understanding the pain points and taking appropriate steps to eliminate them sooner rather than later.

How to maintain service quality in the cloud is the subject of an insightful new book by Randee Adams, Consulting Member of Technical Staff, and Eric Bauer, Reliability Engineering Manager, Alcatel-Lucent, entitled Service Quality of Cloud-Based Applications, and of a recent TechZine article that highlights key findings and recommendations from the authors' exhaustive analysis of the issues.


I spoke with co-author Bauer, who walked me through why the entire cloud ecosystem needs to understand the realities of cloud-based service degradation and how to address them.

“Let’s start with the fact that, first and foremost, customers’ expectations are that, regardless of where their applications reside – in the cloud or on traditional native hardware – their experience is of the highest quality. The user does not care about infrastructure. He cares about things like availability, reliability, responsiveness, retainability (for instance in streamed video sessions) ease-of-use, security, and utility. This is why getting the cloud-based user experience to meet or exceed expectations is non-trivial,” Bauer noted.

He added that, “Realizing that, from a customer experience perspective, there are multiple Key Quality Indicators (KQIs) that need to be recognized and standardized in order for service providers to have metrics for the quality of experience they are delivering, which vary depending on the application invoked, is critical. This is not just for service providers, in terms of monitoring performance or remediating issues but, obviously, also in terms of their ability to meet Service Level Agreements (SLAs).”

The unique challenges of service quality and the cloud: impairments to QoE

The new book builds on the authors' popular 2010 and 2011 works (Beyond Redundancy, and Reliability and Availability of Cloud Computing) to concentrate on quality of experience (QoE). It examines the complexities of cloud-based QoE, which is influenced by such things as the virtualized compute, memory, storage and networking resources delivered by the cloud service provider that hosts the execution of the application software. It is also influenced by the cloud-based technology components that contribute to the application service.

As Bauer said, “These resource-facing capabilities bring additional impairment risks into play. An application may have to contend with inconsistent infrastructure resource delivery due to resource contention or virtual machine (VM) failures such as stalls and premature releases. These impairments can impact customers by degrading application service quality.”

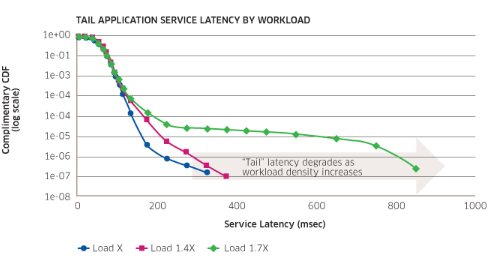

The big service degradation risk in the cloud is infrastructure service latency. In non-virtualized applications, the fastest and slowest query response times aren’t markedly different. However, the same app executing on virtualized infrastructure “often experiences a knee in the service latency distribution and a tail after which operations have significantly greater service latency. Users who encounter particularly slow response times in the distribution’s tail may lose patience with the virtualized application.” Another way of saying this is that the response time has exceeded the user's KQI threshold.
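To make the "knee and tail" idea concrete, here is a minimal sketch of how a latency distribution might be summarized against a KQI threshold. The sample values, the 200 ms threshold and the function names are illustrative assumptions, not figures or methods from the book.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def tail_report(latencies_ms, kqi_threshold_ms):
    """Summarize the median, the tail, and the share of requests over the threshold."""
    over = [x for x in latencies_ms if x > kqi_threshold_ms]
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p99_ms": percentile(latencies_ms, 99),
        "max_ms": max(latencies_ms),
        "fraction_over_threshold": len(over) / len(latencies_ms),
    }


# Hypothetical response times in milliseconds (invented for illustration).
native = [20] * 90 + [25] * 10
virtualized = [20] * 90 + [25] * 7 + [250, 400, 900]

print(tail_report(native, kqi_threshold_ms=200))
print(tail_report(virtualized, kqi_threshold_ms=200))
```

In this made-up data both deployments share the same median, so an average-based view would hide the difference. Only the high percentiles and the over-threshold fraction expose the slow tail that users actually notice.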

This latency impairment is demonstrated in the accompanying graphic.

As Bauer explained, “Latency is not the only issue that creates impairment issues when moving to the cloud.” Others include:

Impairments created by shifting accountabilities

A cloud service provider may bring together software, networking and as-a-service components from multiple vendors to realize an application service, which makes it difficult to trace problems and determine who is responsible for fixing them. These difficulties can be addressed through standardized cloud infrastructure service quality metrics to keep everybody honest and accountable.

Impairments created by new partnerships

In addition to application software, application instances executing on cloud infrastructure rely on critical components provided through partnerships to deliver acceptable QoE. These components include virtual machines, connectivity-as-a-service (which is vulnerable to packet loss, packet latency, packet jitter and unavailability impairments), and technology components offered as-a-service, which can shorten an application’s time to market and reduce operating expense. The authors add that capabilities like Database-as-a-Service (DBaaS) and Load-Balancing-as-a-Service (LBaaS) allow cloud service providers to ‘buy’ a mature technology component service rather than ‘building’ private and application-specific instances. However, these offerings are vulnerable to service reliability, latency, quality and unavailability impairments as well.

Help and hope are available

While the book contains over 150 illustrations of problems, the good news for readers is that the authors also provide recommendations for overcoming the challenges they present.

Understand that different applications have different customer-facing service quality sensitivities relative to cloud service provider impairments.

Architect applications to minimize the impact of cloud infrastructure impairments on end customers. In addition, test applications with likely infrastructure impairments to ensure that customers consistently receive acceptable service quality.

Recognize that good fences make good neighbors. Agree on SLOs (Service Level Objectives) for all cloud infrastructure KQIs so that fault isolation can be expedited when applications encounter user service quality impairments. Better definition of service boundaries and requirements will make it easier to pinpoint problems and determine who has ownership for fixing root causes.
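As a rough illustration of how agreed SLOs can speed up fault isolation, the sketch below compares measured infrastructure KQIs against per-KQI limits and reports which party owns each breach. The KQI names, limits and owners are hypothetical, and every KQI is assumed to be "lower is better". None of this is taken from the book or from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slo:
    kqi: str      # hypothetical KQI name
    owner: str    # party accountable when the objective is missed
    limit: float  # maximum acceptable value (assumes lower is better)


def violations(slos, measurements):
    """Return (kqi, owner, measured, limit) for every KQI that exceeds its SLO."""
    breached = []
    for slo in slos:
        measured = measurements.get(slo.kqi)
        if measured is None:
            continue  # no measurement available; nothing to compare
        if measured > slo.limit:
            breached.append((slo.kqi, slo.owner, measured, slo.limit))
    return breached


# Illustrative objectives and measurements (invented values).
slos = [
    Slo("vm_stall_ms_per_min", "infrastructure provider", 50.0),
    Slo("packet_loss_pct", "connectivity provider", 0.1),
    Slo("dbaas_p99_latency_ms", "DBaaS provider", 30.0),
]
measurements = {
    "vm_stall_ms_per_min": 12.0,
    "packet_loss_pct": 0.4,
    "dbaas_p99_latency_ms": 28.0,
}

for kqi, owner, measured, limit in violations(slos, measurements):
    print(f"{kqi}: measured {measured} > limit {limit}; owner: {owner}")
```

With the objectives written down in advance, a user-facing slowdown can be traced to the one boundary where a limit was exceeded instead of being debated across vendors.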

Bauer also noted that, in an effort to help service providers deal with the challenges cited, ALU, in partnership with AT&T, has proposed that the industry (e.g., ETSI NFV, QuEST Forum) standardize quantitative KQIs for application-facing services exposed by IaaS/NFV infrastructure to accelerate maturation of cloud infrastructure service quality across the industry.

Bauer concluded by highlighting the fact that, “Increased utilization means somebody waits. It is a time-shared system and not dedicated access. The task at hand is to assure that waiting is imperceptible to the user or they will go elsewhere.”

A good way to look at how to assure quality of service in the cloud is to think in terms of three pillars for either avoiding impairments completely or mitigating their impact as fast as possible: visibility, metrics and accountability. All three are part of the solution for achieving a QoE glide path that can be a win/win for all members of the cloud ecosystem and their users. We may be early in the learning curve on how best to address impairment issues, but reading the book and getting involved with the ALU/AT&T efforts with ETSI is a way to stay informed and help shape the future.


Edited by Maurice Nagle